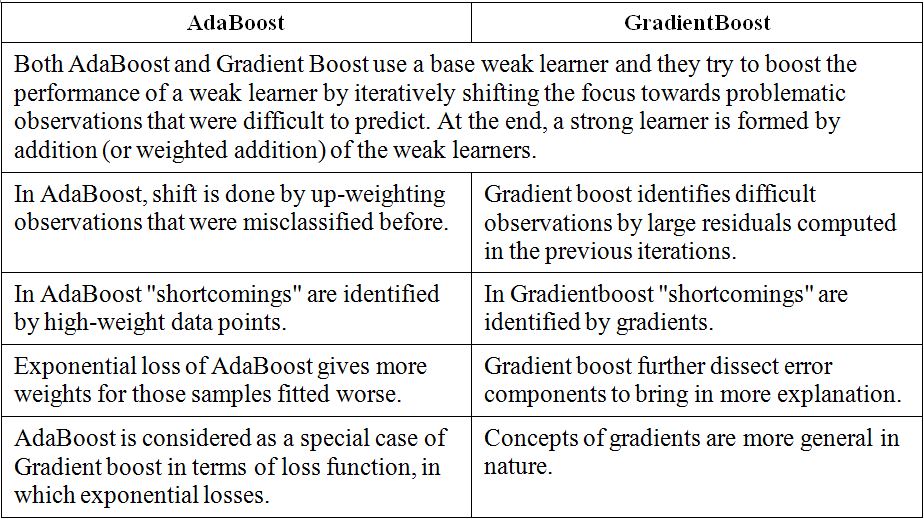

XGBoost often delivers higher performance than a standard gradient boosting implementation. AdaBoost, developed by Freund and Schapire, was the first practical boosting algorithm.


This diagram is taken from XGBoost's documentation.

I think the difference between gradient boosting and XGBoost is that XGBoost focuses on computational power by parallelizing tree construction, as one can see in this blog. The base learner can be a tree, a stump, or another model, even a linear model. So what are the differences between adaptive boosting and gradient boosting?

GBM is an algorithm, and you can find the details in Friedman's paper "Greedy Function Approximation: A Gradient Boosting Machine". Lower sampling ratios help avoid over-fitting. The gradient boosting algorithm can be used to train models for both regression and classification problems.

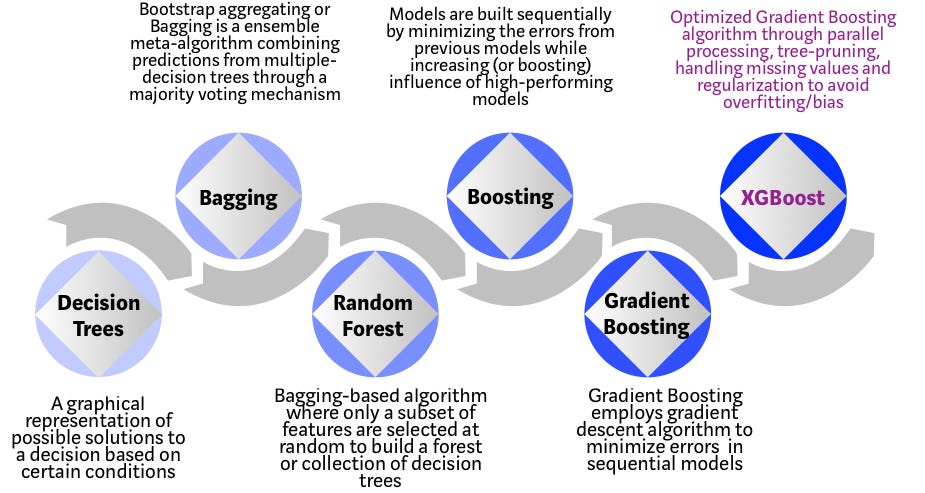

XGBoost (eXtreme Gradient Boosting) is an advanced implementation of the gradient boosting algorithm. Gradient Boosting was developed as a generalization of AdaBoost, by observing that what AdaBoost was doing was a gradient search in decision-tree space. Over the years, gradient boosting has found applications across various technical fields.

AdaBoost (Adaptive Boosting) works on improving the … XGBoost uses advanced regularization (L1 and L2), which improves model generalization capabilities. Extreme Gradient Boosting, or XGBoost for short, is an efficient open-source implementation of the gradient boosting algorithm.

XGBoost's training is very fast and can be parallelized or distributed across clusters. XGBoost is faster than gradient boosting, but gradient boosting has a wide range of applications.

Extreme Gradient Boosting (XGBoost) is one of the most popular variants of gradient boosting. I think the Wikipedia article on gradient boosting explains the connection to gradient descent really well.

On the mathematical differences between GBM and XGBoost: first, I suggest you read Friedman's paper on the Gradient Boosting Machine, applied to linear regressor models, classifiers, and decision trees in particular. The algorithm is similar to Adaptive Boosting (AdaBoost) but differs from it in certain aspects. The different types of boosting algorithms discussed here are AdaBoost, Gradient Boosting, and XGBoost.

Here is an example of using a linear model as the base learner in XGBoost. Plain gradient boosting does not explicitly manage the bias-variance trade-off, whereas XGBoost adds a regularization term to its objective. XGBoost is a more regularized form of Gradient Boosting.
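To make the idea of a linear base learner concrete, here is a minimal sketch in plain Python. It does not call XGBoost (where the corresponding choice is the `gblinear` booster); the helper names `fit_line` and `boost_linear` are made up for this illustration, and squared loss on a single feature is assumed.

```python
# Sketch only: gradient boosting for squared loss where every base learner
# is a straight line fitted to the current residuals.

def fit_line(xs, rs):
    """Least-squares slope and intercept of residuals rs against feature xs."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_r = sum(rs) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxr = sum((x - mean_x) * (r - mean_r) for x, r in zip(xs, rs))
    slope = sxr / sxx if sxx > 0 else 0.0
    return slope, mean_r - slope * mean_x

def boost_linear(xs, ys, n_rounds=50, learning_rate=0.3):
    """Add shrunken linear fits round by round; the sum stays a linear model."""
    slope_total, intercept_total = 0.0, 0.0
    for _ in range(n_rounds):
        residuals = [y - (slope_total * x + intercept_total)
                     for x, y in zip(xs, ys)]
        slope, intercept = fit_line(xs, residuals)
        slope_total += learning_rate * slope
        intercept_total += learning_rate * intercept
    return slope_total, intercept_total

xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [1.0, 3.0, 5.0, 7.0, 9.0]  # generated from y = 2x + 1
slope, intercept = boost_linear(xs, ys)
print(slope, intercept)
```

Because a sum of lines is still a line, boosting linear learners never produces anything more expressive than one linear model. That is one reason trees are the usual default.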

What are the fundamental differences between XGBoost and the gradient boosting classifier from scikit-learn? Gradient Boosting is also a boosting algorithm; hence it also tries to create a strong learner from an ensemble of weak learners.

XGBoost can also be used to train Random Forest-style ensembles. As for the difference between gradient boosting and AdaBoost: both are ensemble techniques applied in machine learning to enhance the efficacy of weak learners.
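For contrast with gradient boosting, the AdaBoost side can be sketched as follows: instead of fitting residuals, each round reweights the training samples so that the next weak learner concentrates on the ones misclassified so far. This is a minimal discrete AdaBoost with one-dimensional decision stumps, written for illustration; the function names and the toy data are assumptions, not taken from any library.

```python
import math

def best_stump(xs, ys, weights):
    """Return (weighted_error, threshold, polarity) of the best stump.

    A stump predicts `polarity` when x <= threshold and -polarity otherwise.
    Labels are expected to be +1 or -1.
    """
    best = None
    for threshold in sorted(set(xs)):
        for polarity in (1, -1):
            preds = [polarity if x <= threshold else -polarity for x in xs]
            err = sum(w for w, p, y in zip(weights, preds, ys) if p != y)
            if best is None or err < best[0]:
                best = (err, threshold, polarity)
    return best

def adaboost(xs, ys, n_rounds=3):
    n = len(xs)
    weights = [1.0 / n] * n  # start with uniform sample weights
    ensemble = []
    for _ in range(n_rounds):
        err, threshold, polarity = best_stump(xs, ys, weights)
        err = min(max(err, 1e-10), 1 - 1e-10)  # guard against log(0)
        alpha = 0.5 * math.log((1 - err) / err)  # vote of this stump
        ensemble.append((alpha, threshold, polarity))
        preds = [polarity if x <= threshold else -polarity for x in xs]
        # Misclassified samples (y * p == -1) gain weight, the rest lose it.
        weights = [w * math.exp(-alpha * y * p)
                   for w, y, p in zip(weights, ys, preds)]
        total = sum(weights)
        weights = [w / total for w in weights]
    return ensemble

def predict(ensemble, x):
    score = sum(a * (p if x <= t else -p) for a, t, p in ensemble)
    return 1 if score >= 0 else -1

xs = [1, 2, 3, 4, 5, 6]
ys = [1, 1, -1, -1, 1, 1]  # no single stump separates this pattern
ensemble = adaboost(xs, ys, n_rounds=3)
print([predict(ensemble, x) for x in xs])
```

No single stump can classify this toy data, but the weighted vote of three stumps can, which is the "weak learners into a strong learner" idea in miniature.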

In one study of EEG dynamical complexity between resting and attention states, an extreme gradient boosting (XGBoost) model was used as a classifier to distinguish different levels of sustained attention, and the ApEn, SampEn, FuzzyEn, MSE, and MFE of … were analyzed. XGBoost computes second-order gradients, i.e. second derivatives of the loss function.
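As a rough illustration of how L1 and L2 regularization interact with the gradient statistics, the sketch below computes the value of a single leaf from the summed first and second derivatives of the loss. The parameter names `reg_alpha` (L1) and `reg_lambda` (L2) mirror the names used in XGBoost's scikit-learn wrapper, but the function itself is a hand-written assumption for this post, not library code.

```python
def leaf_weight(grad_sum, hess_sum, reg_lambda=1.0, reg_alpha=0.0):
    """Regularized Newton step for one leaf.

    L1 (reg_alpha) soft-thresholds the gradient sum towards zero;
    L2 (reg_lambda) enlarges the denominator and shrinks the step.
    """
    if grad_sum > reg_alpha:
        shrunk = grad_sum - reg_alpha
    elif grad_sum < -reg_alpha:
        shrunk = grad_sum + reg_alpha
    else:
        shrunk = 0.0
    return -shrunk / (hess_sum + reg_lambda)

# Squared loss 1/2 * (pred - y)^2: gradient is pred - y, second derivative is 1.
targets = [1.0, 2.0, 3.0]
preds = [0.0, 0.0, 0.0]
grads = [p - y for p, y in zip(preds, targets)]
hessians = [1.0] * len(targets)
G, H = sum(grads), sum(hessians)

print(leaf_weight(G, H, reg_lambda=0.0))                 # unregularized: mean residual
print(leaf_weight(G, H, reg_lambda=1.0))                 # L2 shrinks the step
print(leaf_weight(G, H, reg_lambda=1.0, reg_alpha=1.0))  # L1 shrinks it further
```

With no regularization the leaf value is simply the mean residual; each penalty pulls it closer to zero, which is the sense in which the model is "more regularized".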

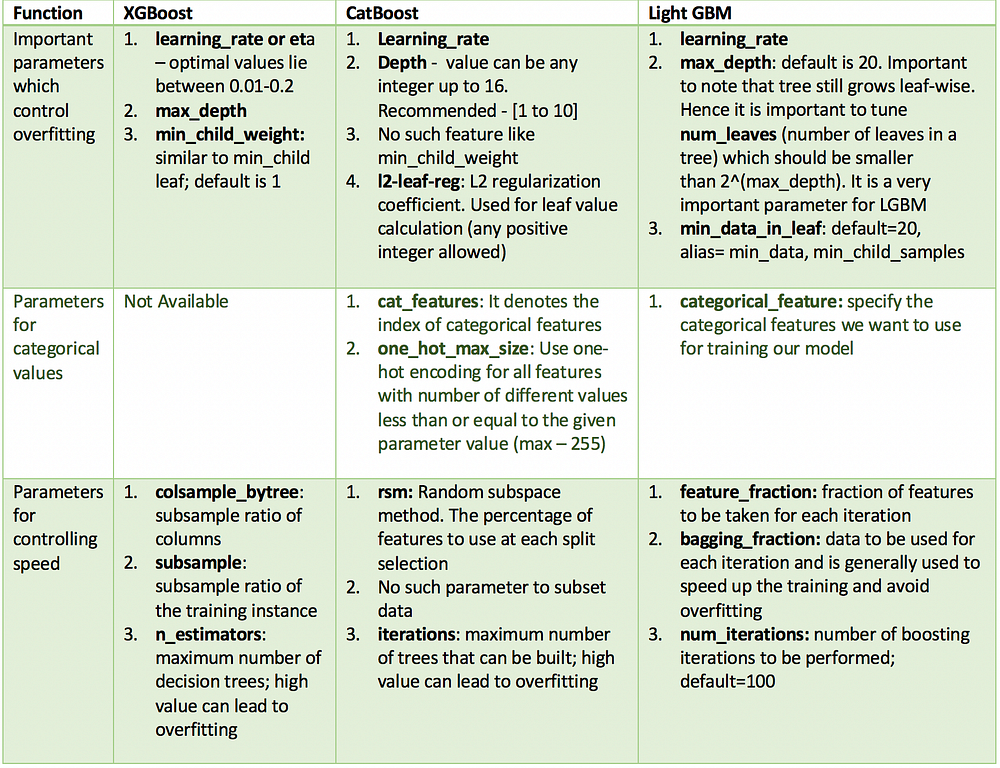

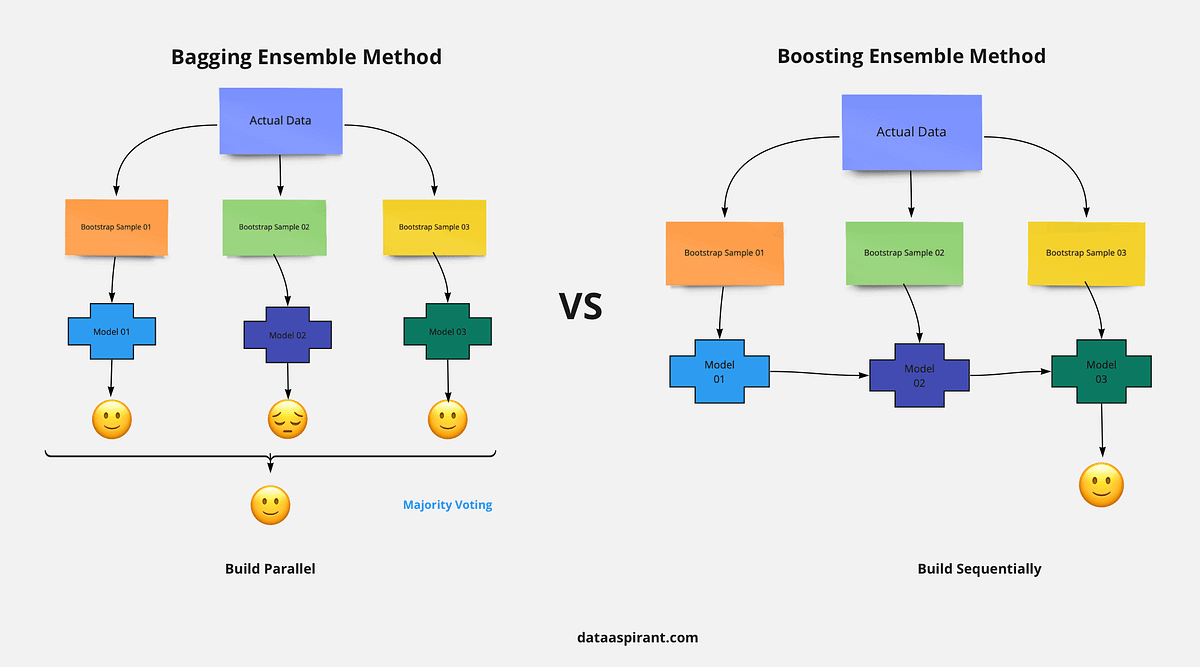

The concept of a boosting algorithm is to train predictors successively, where every subsequent model tries to fix the flaws of its predecessor. Boosting is a method of converting a set of weak learners into a strong learner.

Gradient boosted trees have been around for a while, and there is a lot of material on the topic. XGBoost is short for the eXtreme Gradient Boosting package. XGBoost is an implementation of GBM, and you can configure which base learner it uses.

AdaBoost is short for adaptive boosting. I learned that XGBoost uses Newton's method to optimize the loss function, but I don't understand what happens when the Hessian is not positive definite.
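One way to approach the Hessian question is to write out the second-order objective as it is usually presented for boosted trees. The notation below ($g_i$, $h_i$, $\gamma$, $\lambda$, $T$, $w_j$) follows the common textbook form and is an assumption here, since the source does not fix any symbols. At round $t$, with $f_t$ the new tree:

$$
\mathcal{L}^{(t)} \approx \sum_{i=1}^{n} \left[ g_i\, f_t(x_i) + \tfrac{1}{2}\, h_i\, f_t(x_i)^2 \right] + \gamma T + \tfrac{1}{2} \lambda \sum_{j=1}^{T} w_j^2,
\qquad
g_i = \frac{\partial\, l(y_i, \hat{y}_i)}{\partial \hat{y}_i},
\quad
h_i = \frac{\partial^2 l(y_i, \hat{y}_i)}{\partial \hat{y}_i^2}.
$$

Grouping the samples that fall into leaf $j$ as $I_j$, with $G_j = \sum_{i \in I_j} g_i$ and $H_j = \sum_{i \in I_j} h_i$, the best leaf value is

$$
w_j^{*} = -\frac{G_j}{H_j + \lambda}.
$$

In this form each $h_i$ is a scalar per sample rather than a full matrix. For convex losses such as squared error or logistic loss, $h_i \ge 0$, so with $\lambda > 0$ the denominator stays positive and the step is well defined. For a non-convex loss that guarantee is lost, and the formula alone does not say what a given implementation does in that case.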

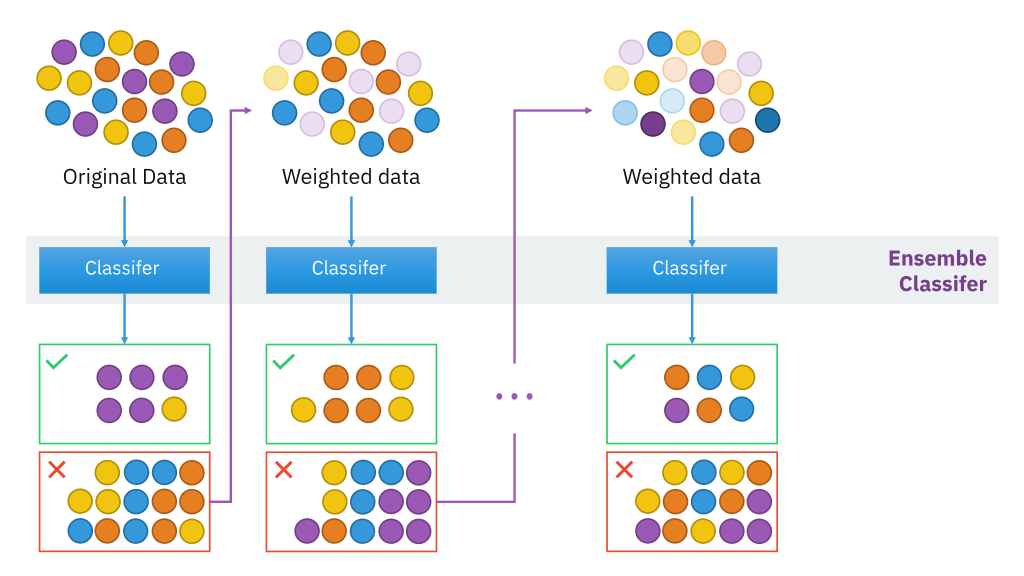

It worked but wasn't that efficient. With the advent of gradient boosted (GB) decision trees (AdaBoost, XGBoost, LGBM), such systems have gained notable popularity over other tree-based methods such as Random Forest (RF). Gradient boosted trees consider the special case where the simple model h is a decision tree.

XGBoost specifically trains gradient boosted decision trees. The training methods used by the two algorithms are different.

I have several questions below. AdaBoost, Gradient Boosting, and XGBoost are three algorithms that do not get much recognition.

While regular gradient boosting uses the loss function of the base model (e.g. a decision tree) as a proxy for minimizing the error of the overall model, XGBoost uses the second-order derivative as an approximation. Boosting algorithms are iterative functional gradient descent algorithms.
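The use of second-order derivatives mentioned above shows up most visibly when a candidate split is scored. The sketch below computes first and second derivatives of the logistic loss and uses them in the commonly quoted gain formula; `split_gain`, `structure_score`, and the toy labels are illustrative assumptions, not XGBoost's actual code.

```python
import math

def logistic_grad_hess(y_true, raw_score):
    """First and second derivative of log loss with respect to the raw score."""
    p = 1.0 / (1.0 + math.exp(-raw_score))
    return p - y_true, p * (1.0 - p)

def structure_score(grad_sum, hess_sum, reg_lambda):
    return grad_sum ** 2 / (hess_sum + reg_lambda)

def split_gain(grads, hessians, left_mask, reg_lambda=1.0, gamma=0.0):
    """Gain of splitting a node into left/right children, minus the penalty gamma."""
    g_left = sum(g for g, m in zip(grads, left_mask) if m)
    h_left = sum(h for h, m in zip(hessians, left_mask) if m)
    g_total, h_total = sum(grads), sum(hessians)
    g_right, h_right = g_total - g_left, h_total - h_left
    return 0.5 * (structure_score(g_left, h_left, reg_lambda)
                  + structure_score(g_right, h_right, reg_lambda)
                  - structure_score(g_total, h_total, reg_lambda)) - gamma

labels = [0, 0, 1, 1]
stats = [logistic_grad_hess(y, 0.0) for y in labels]  # current raw score is 0
grads = [g for g, _ in stats]
hessians = [h for _, h in stats]

good = split_gain(grads, hessians, [True, True, False, False])
bad = split_gain(grads, hessians, [True, False, True, False])
print(good, bad)
```

The split that separates the two classes gets a positive gain, while the split that mixes them gets none, so a tree builder comparing the two would pick the first.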

In this case there are going to be … Both are boosting algorithms, which means that they convert a set of weak learners into a single strong learner. Gradient boosting is a technique for building an ensemble of weak models such that the predictions of the ensemble minimize a loss function.
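The following is a small sketch of that idea for squared loss, where the negative gradient is simply the residual. It uses one-dimensional regression stumps as the weak models; the names `fit_stump`, `gradient_boost`, and `predict`, and the toy data, are assumptions made for this example.

```python
def fit_stump(xs, residuals):
    """Regression stump: the threshold and the mean residual on each side."""
    best = None
    for threshold in sorted(set(xs))[:-1]:  # keep both sides non-empty
        left = [r for x, r in zip(xs, residuals) if x <= threshold]
        right = [r for x, r in zip(xs, residuals) if x > threshold]
        left_mean = sum(left) / len(left)
        right_mean = sum(right) / len(right)
        sse = (sum((r - left_mean) ** 2 for r in left)
               + sum((r - right_mean) ** 2 for r in right))
        if best is None or sse < best[0]:
            best = (sse, threshold, left_mean, right_mean)
    return best[1:]

def gradient_boost(xs, ys, n_rounds=100, learning_rate=0.1):
    base = sum(ys) / len(ys)  # initial constant prediction
    preds = [base] * len(xs)
    stumps = []
    for _ in range(n_rounds):
        # For loss 1/2 * (y - pred)^2 the negative gradient is the residual.
        residuals = [y - p for y, p in zip(ys, preds)]
        threshold, left_mean, right_mean = fit_stump(xs, residuals)
        step = (threshold, learning_rate * left_mean, learning_rate * right_mean)
        stumps.append(step)
        preds = [p + (step[1] if x <= threshold else step[2])
                 for x, p in zip(xs, preds)]
    return base, stumps

def predict(model, x):
    base, stumps = model
    return base + sum(lv if x <= t else rv for t, lv, rv in stumps)

def mse(ys, preds):
    return sum((y - p) ** 2 for y, p in zip(ys, preds)) / len(ys)

xs = [1, 2, 3, 4, 5, 6, 7, 8]
ys = [1.0, 1.2, 0.9, 1.1, 3.0, 3.2, 2.9, 3.1]
model = gradient_boost(xs, ys)
baseline_mse = mse(ys, [sum(ys) / len(ys)] * len(ys))
final_mse = mse(ys, [predict(model, x) for x in xs])
print(baseline_mse, final_mse)
```

Each stump on its own is a poor predictor, but every round removes a little more of the remaining error, so the training loss of the ensemble ends up below that of the constant baseline.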


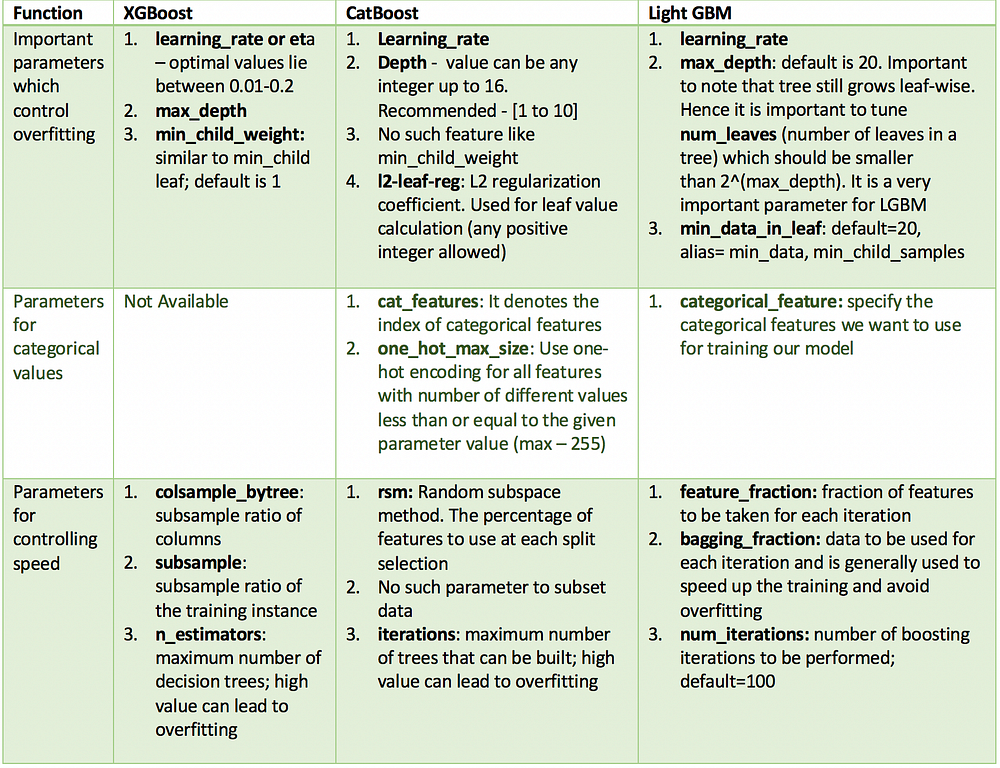

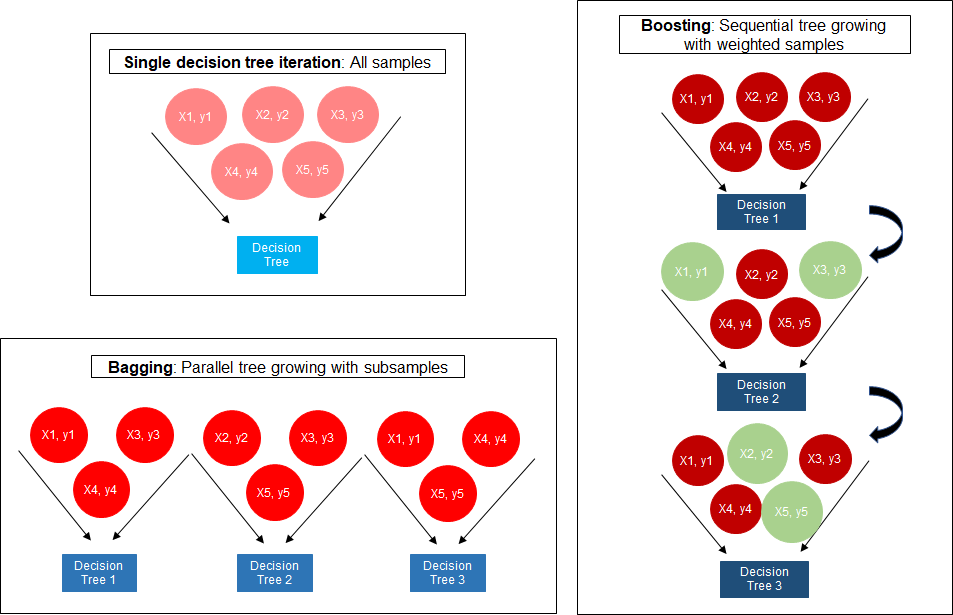

